Americans for Responsible Innovation urges US to vet AI models for government contracts

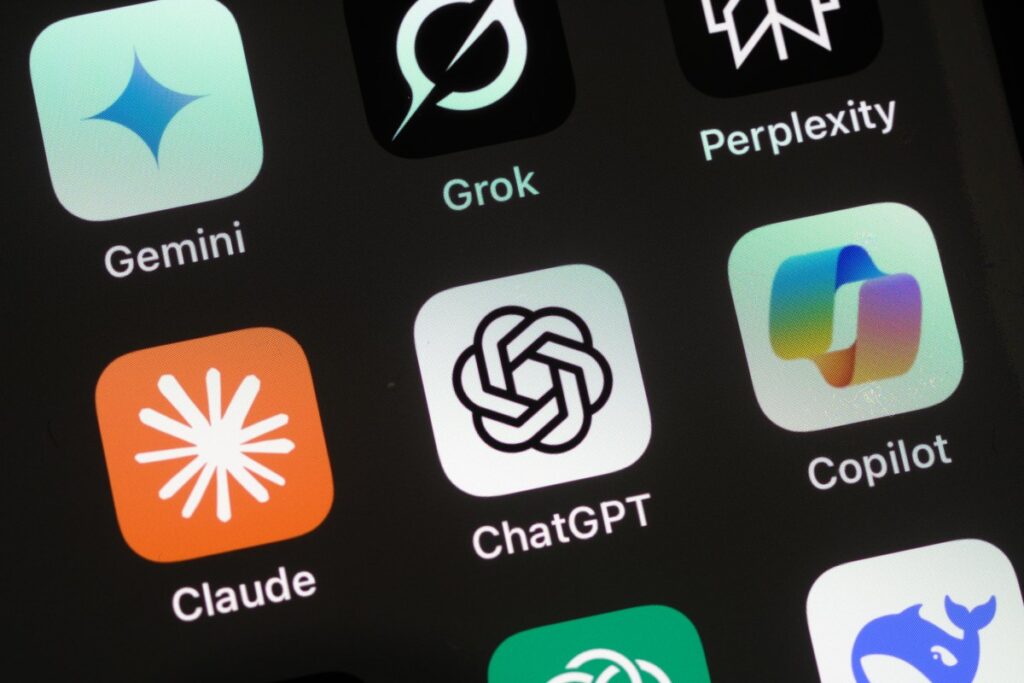

Americans for Responsible Innovation, an advocacy group focused on AI policy, is pushing for a straightforward rule: if an AI lab wants to sell its frontier models to the US government, it should have to pass a safety review first.

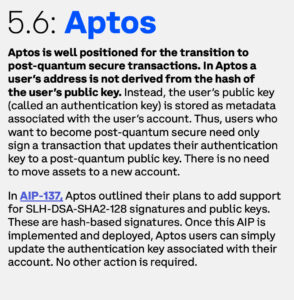

What ARI is actually asking for

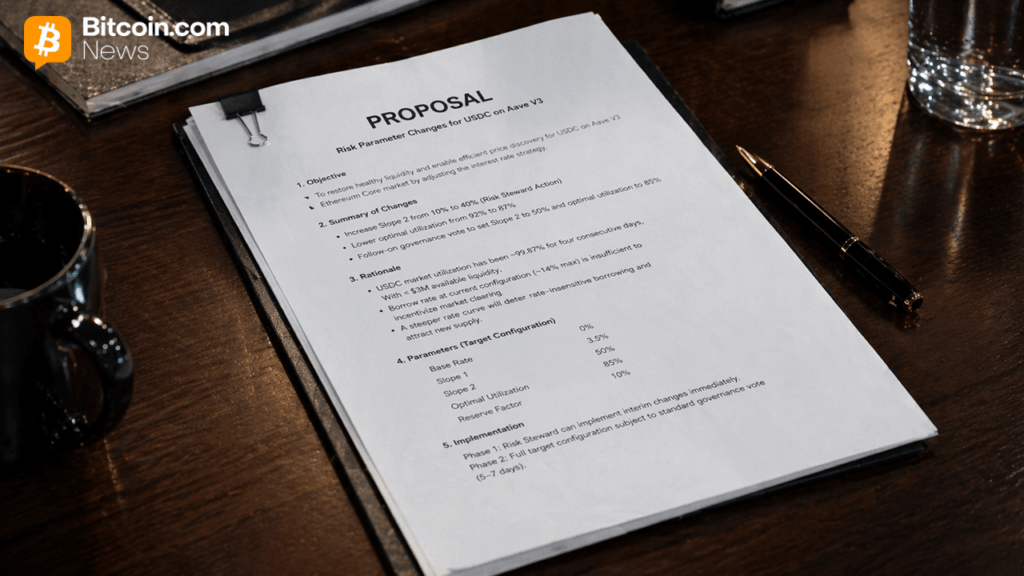

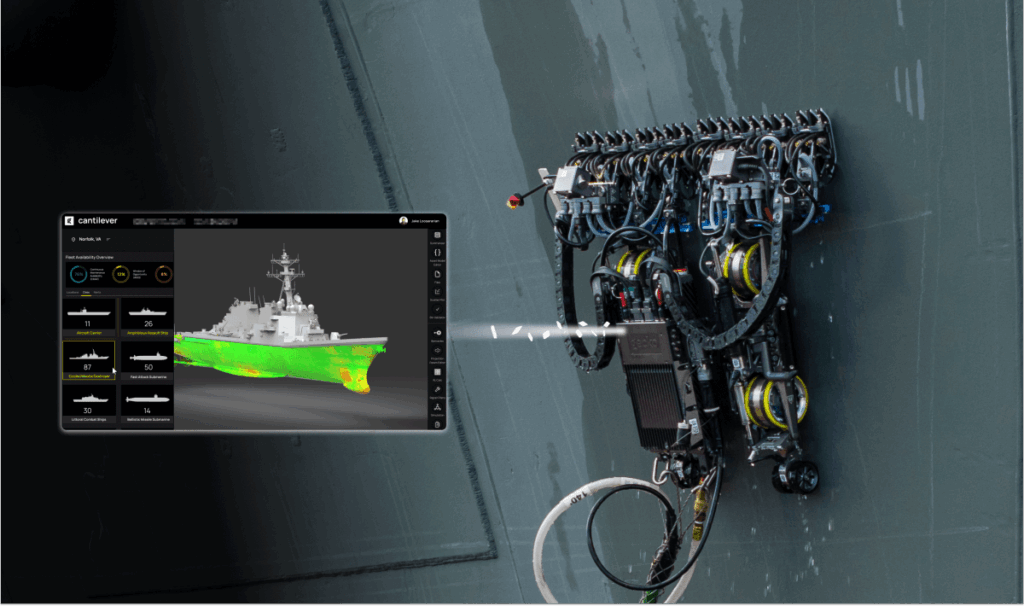

The group’s recommendations center on three pillars: mandatory pre-deployment testing for AI models used in government work, comprehensive reporting requirements for larger AI systems, and federal oversight mechanisms that go beyond the current patchwork of voluntary pledges.

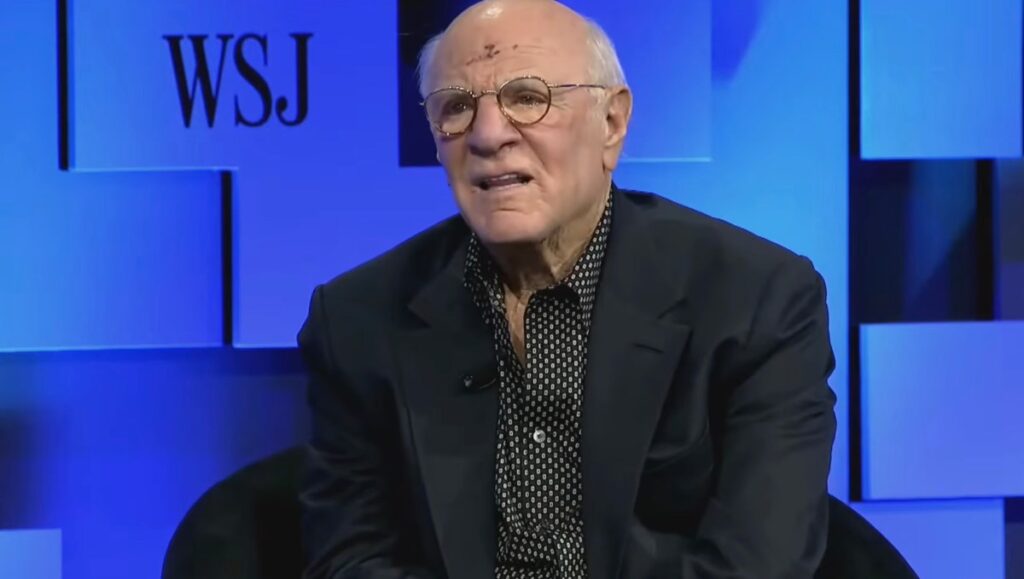

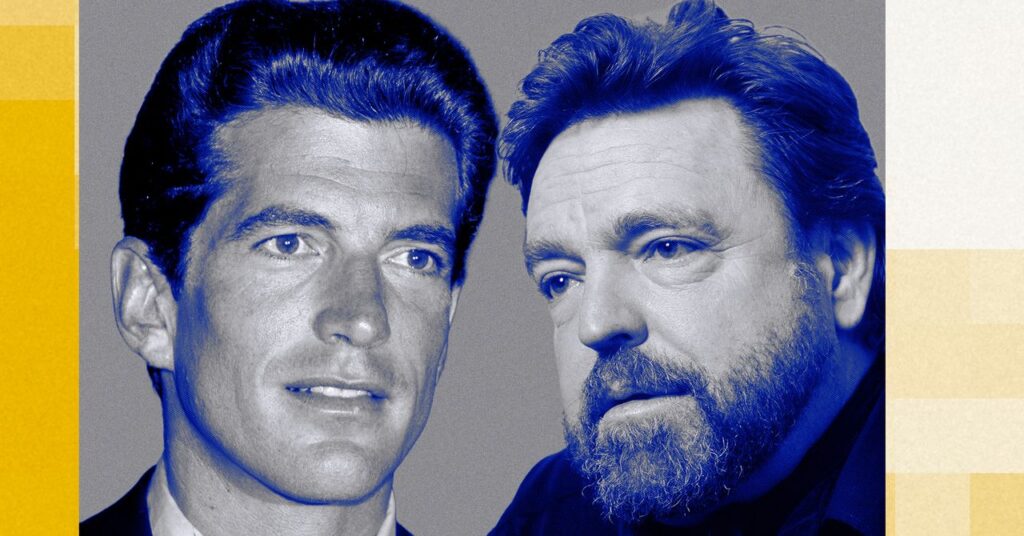

Brad Carson, ARI’s president, has expressed skepticism about voluntary commitments for AI safety.

ARI advocates specifically for proactive safety measures rather than the current approach of trying to hold companies accountable after something goes wrong.

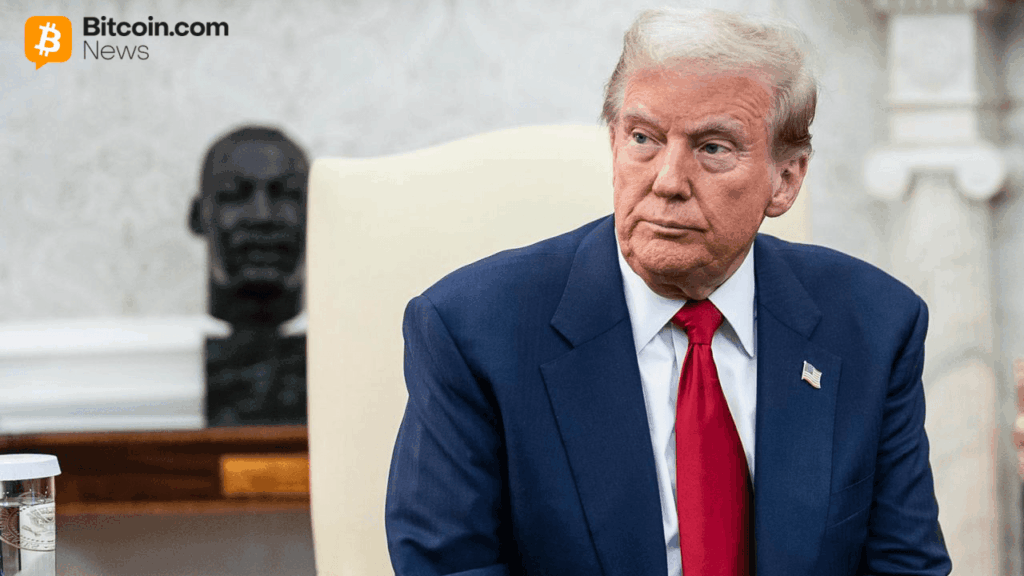

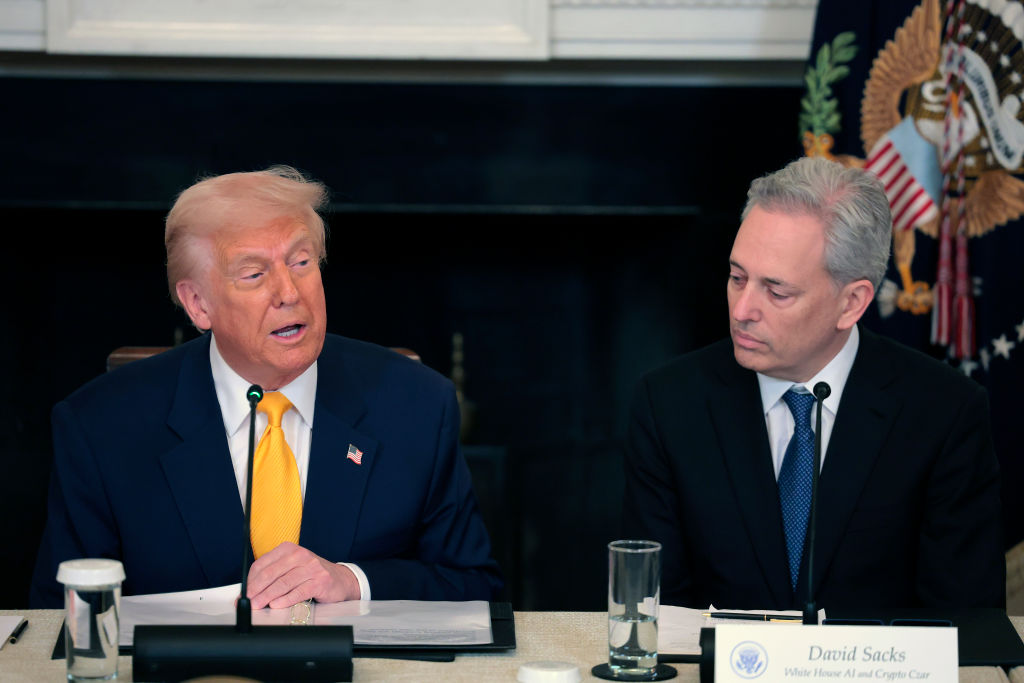

The bigger picture in Washington

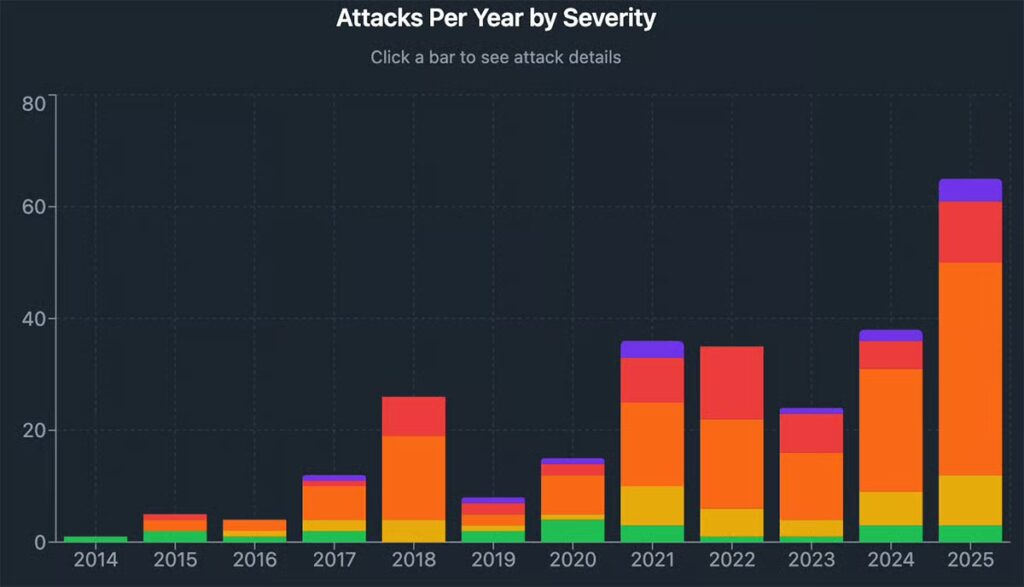

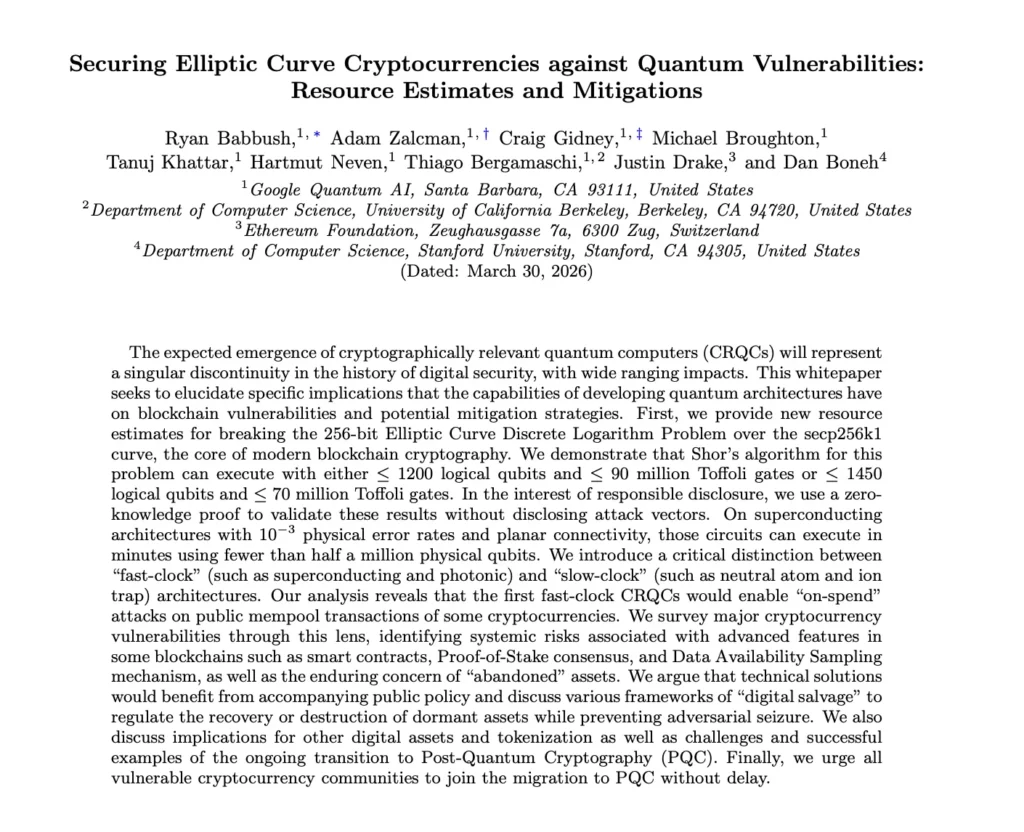

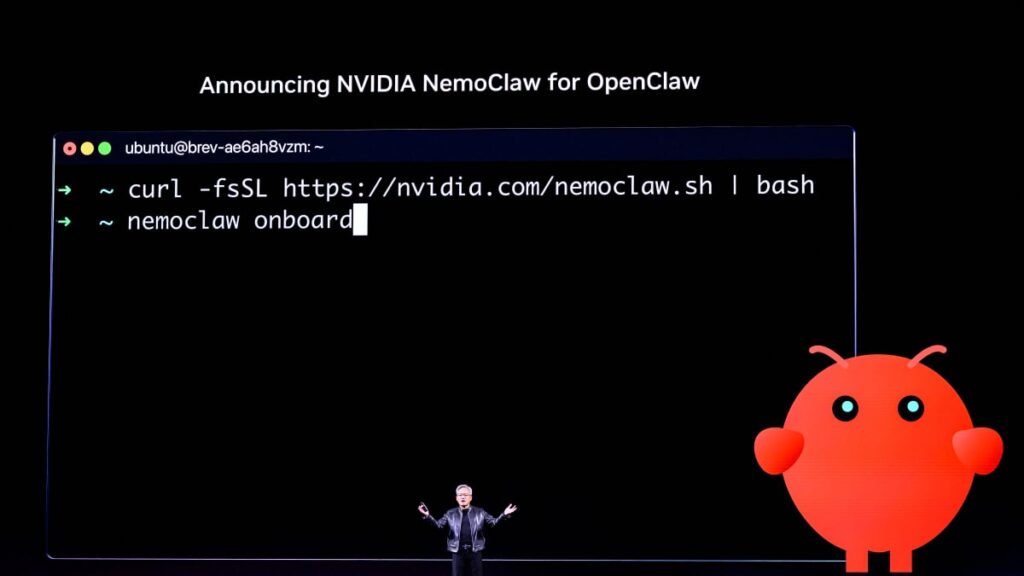

ARI’s push arrives alongside a broader wave of AI oversight activity in the federal government. The White House is reportedly drafting an executive order that would require government approval before advanced AI models are released. The effort stems, at least in part, from cybersecurity concerns related to Anthropic’s Mythos model.

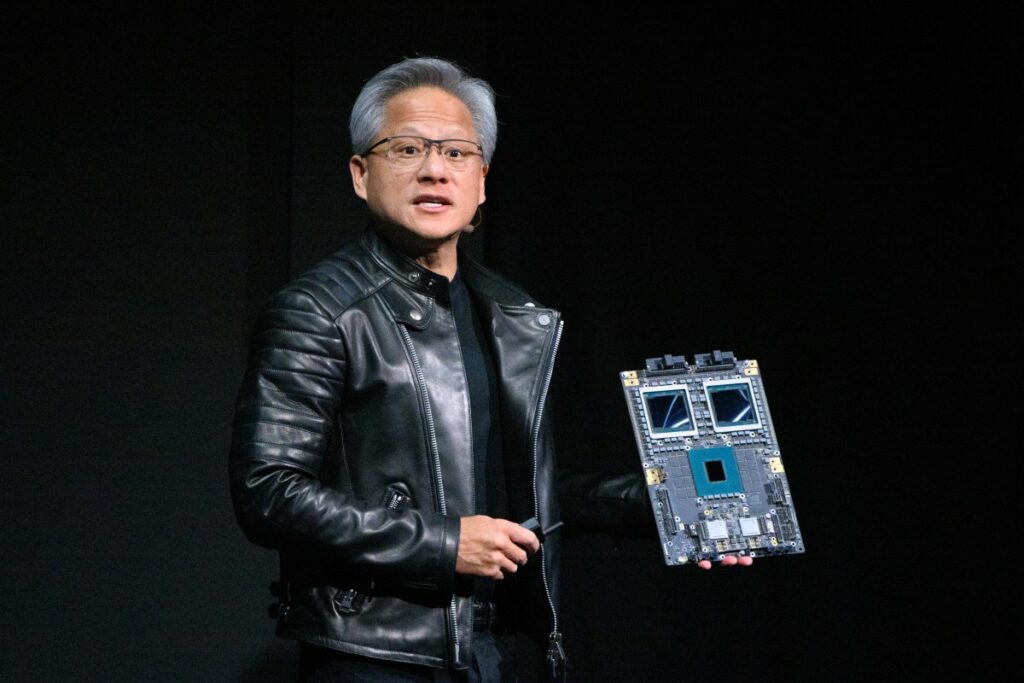

Microsoft, xAI, and Google DeepMind have all agreed to participate in safety evaluations led by CAISI, the Center for AI Standards and Innovation. These evaluations are designed to stress-test models for dangerous capabilities before they reach wide deployment.

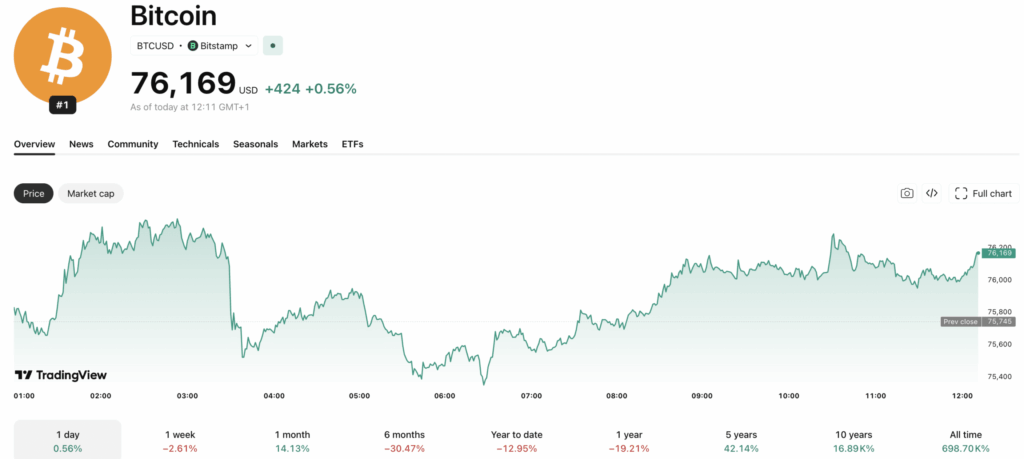

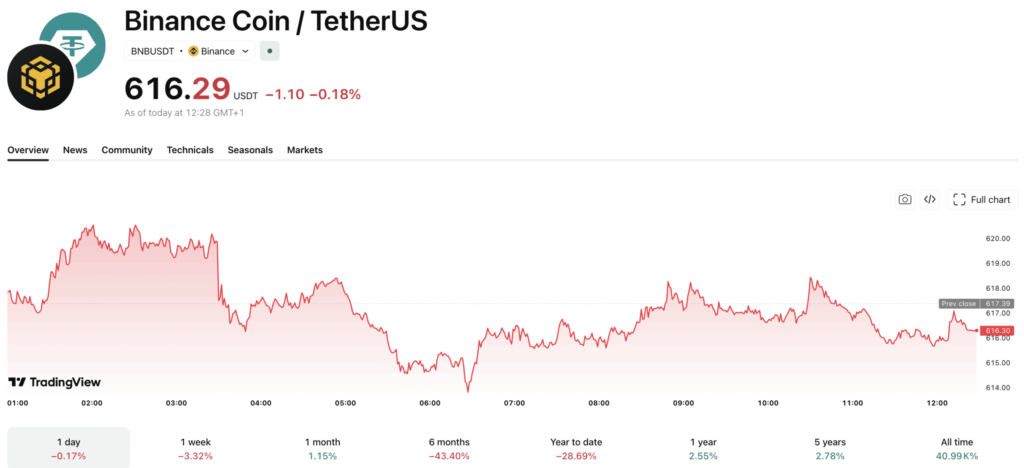

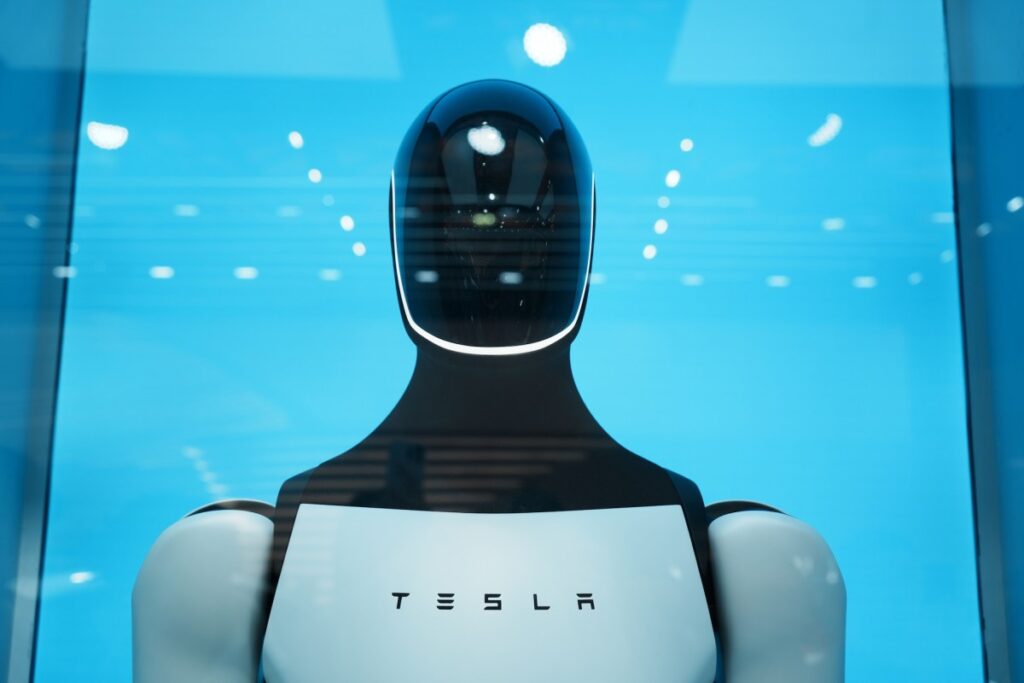

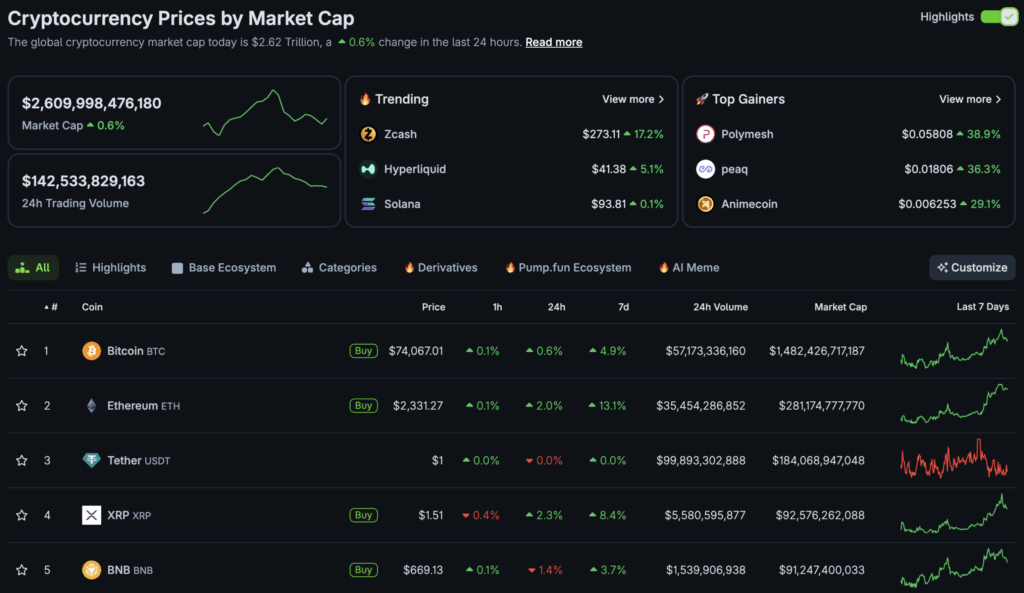

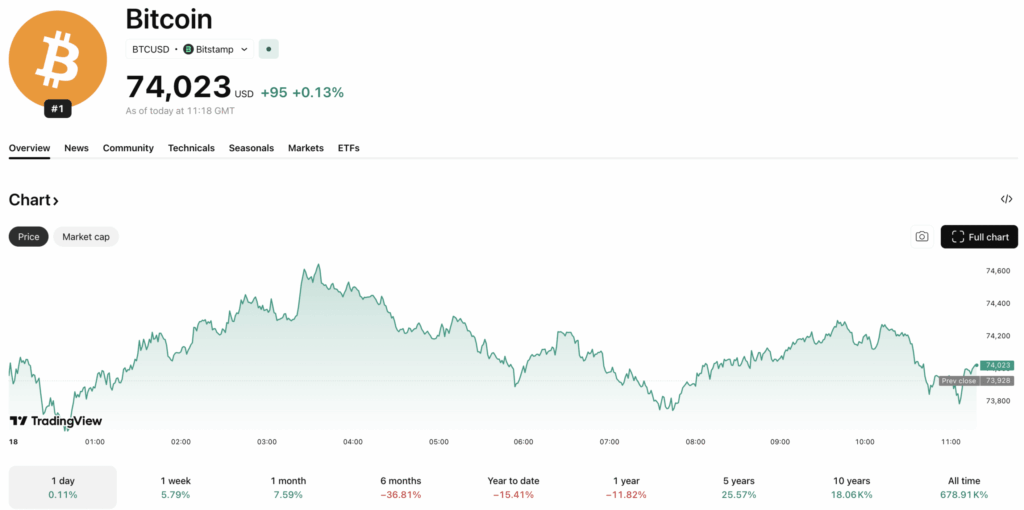

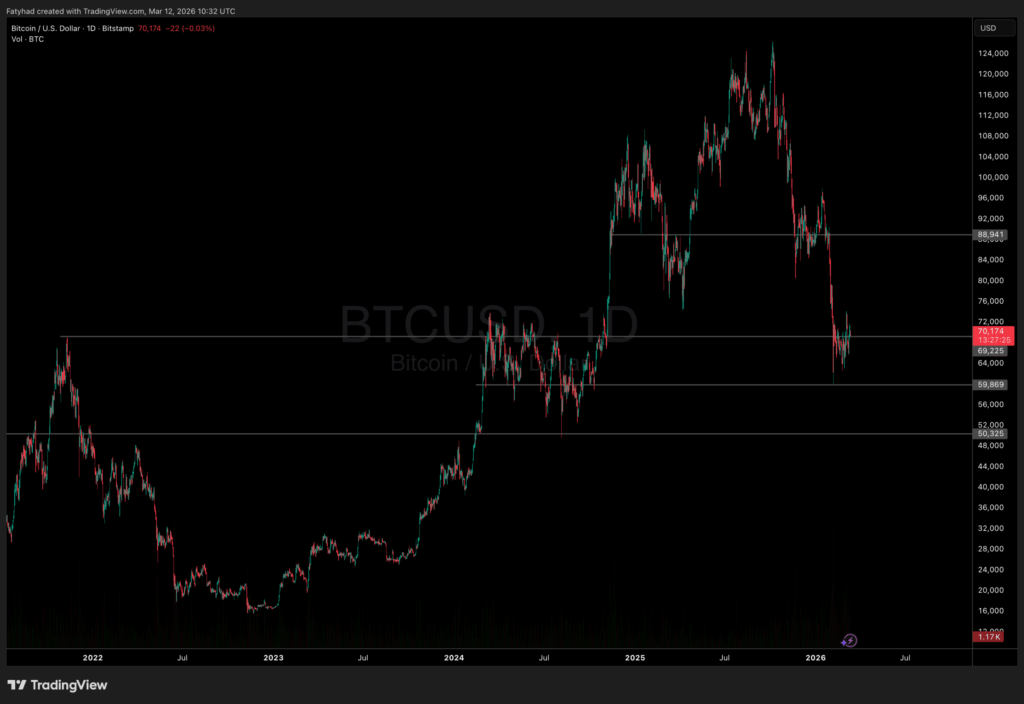

What this means for the tech and crypto sectors

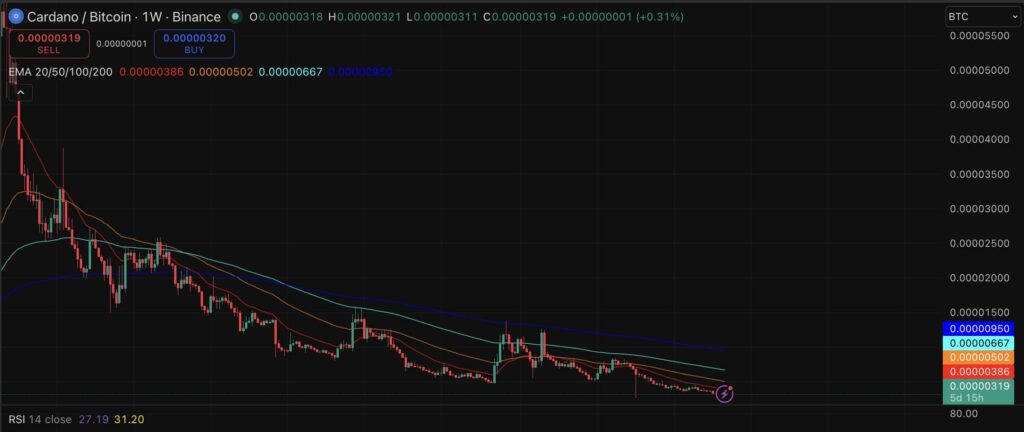

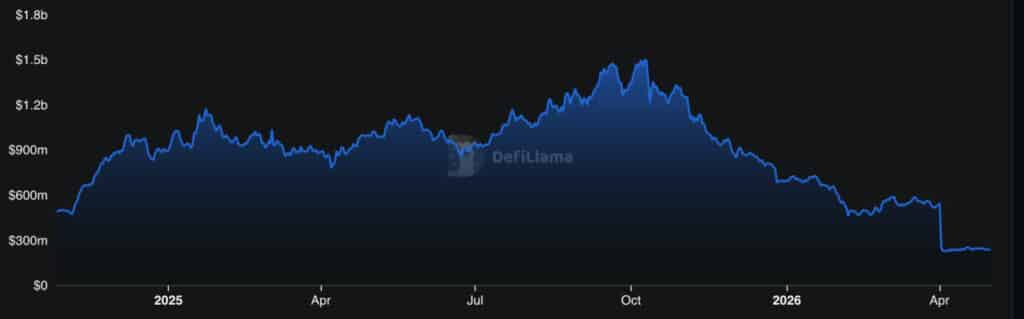

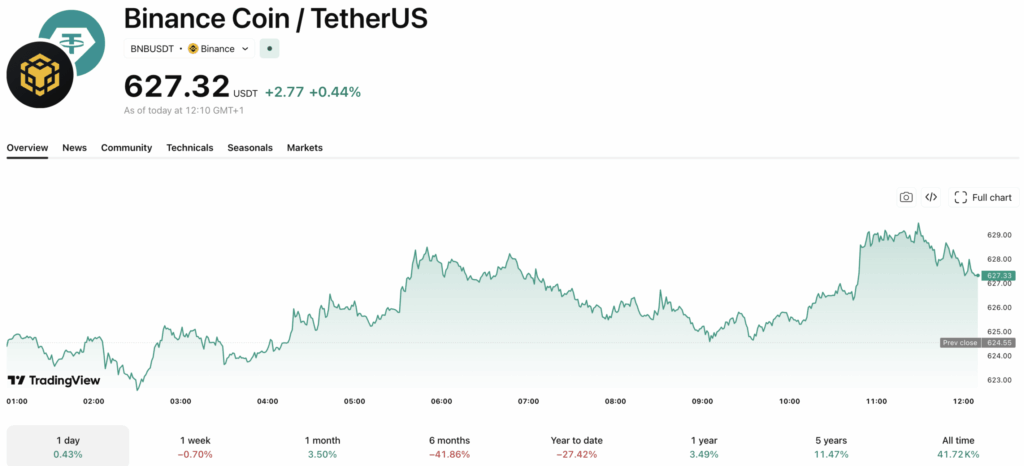

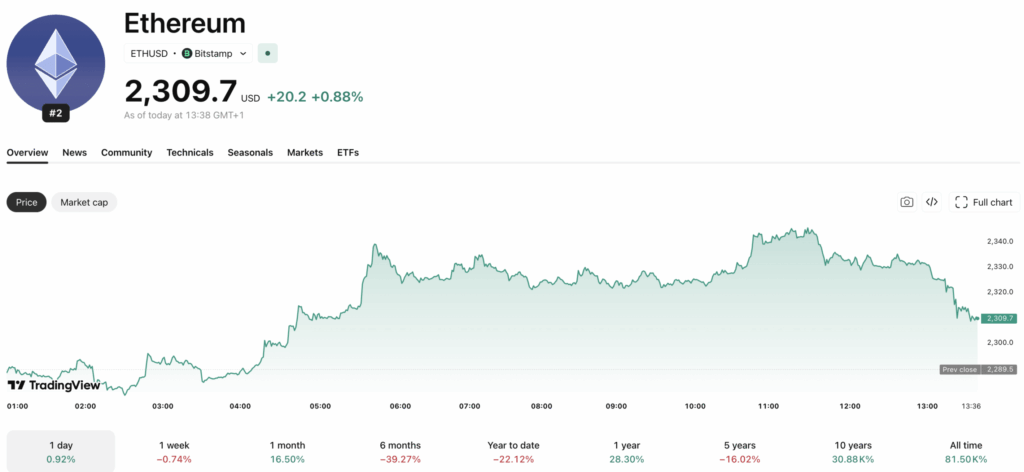

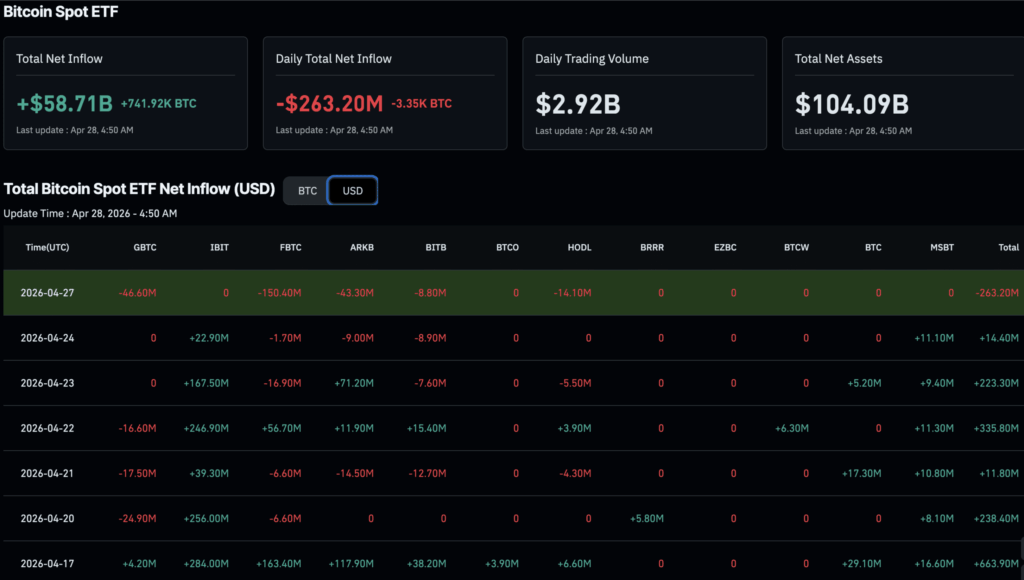

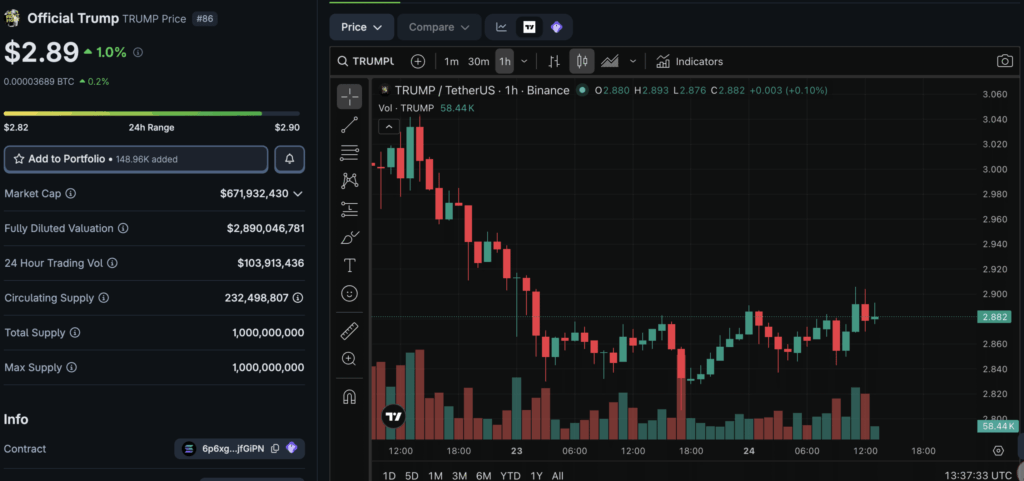

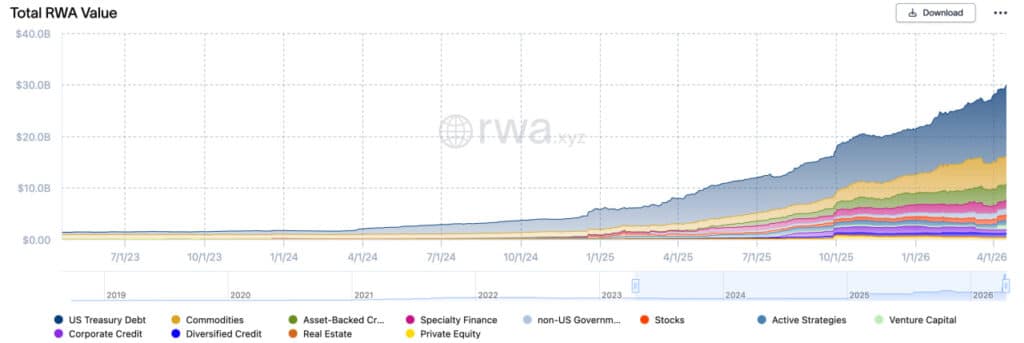

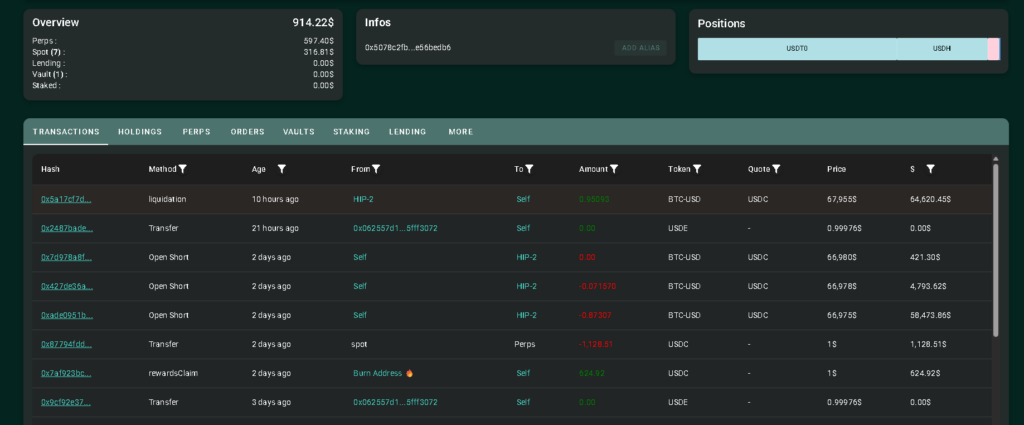

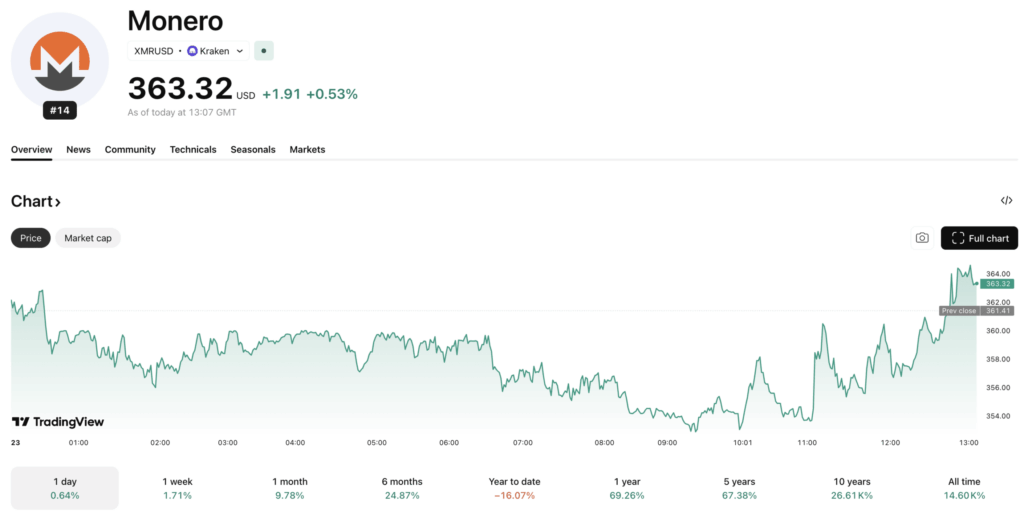

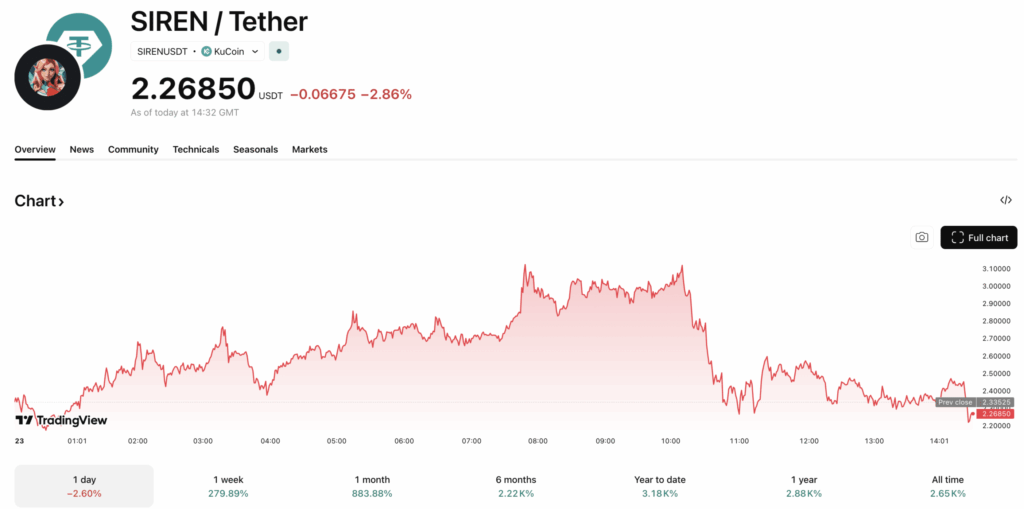

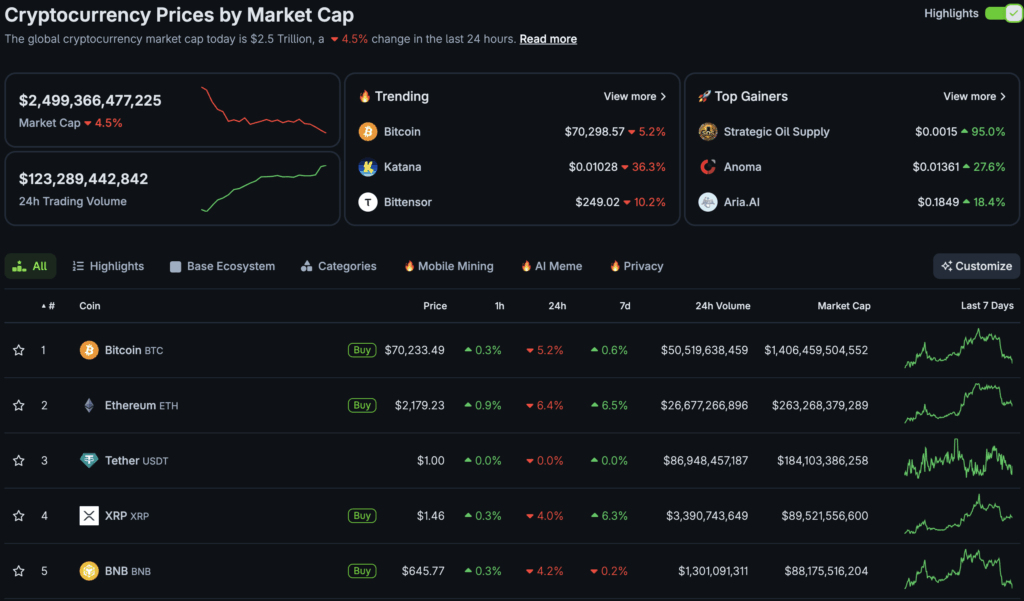

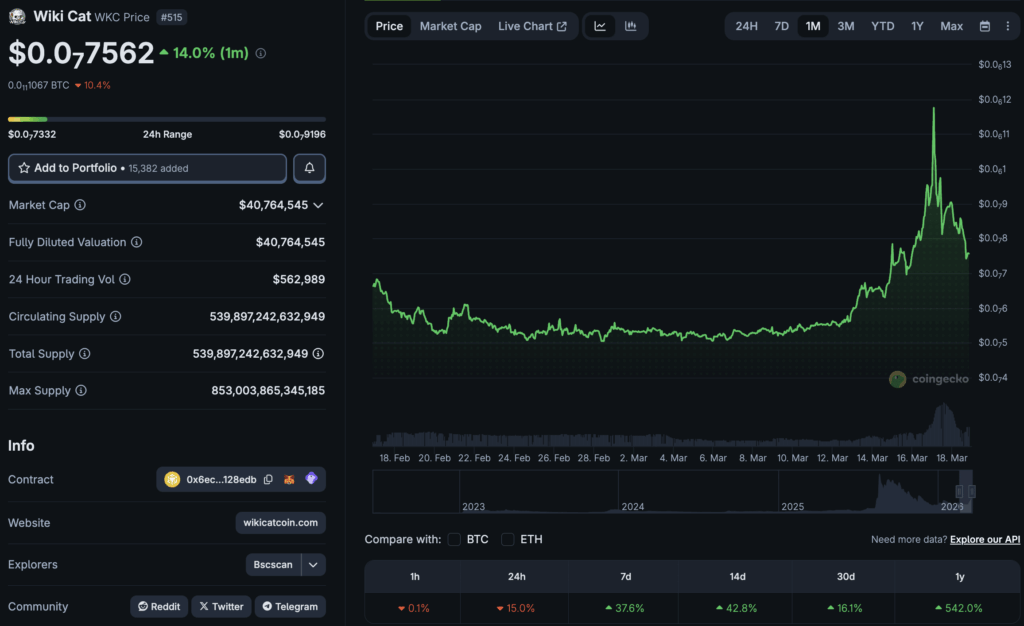

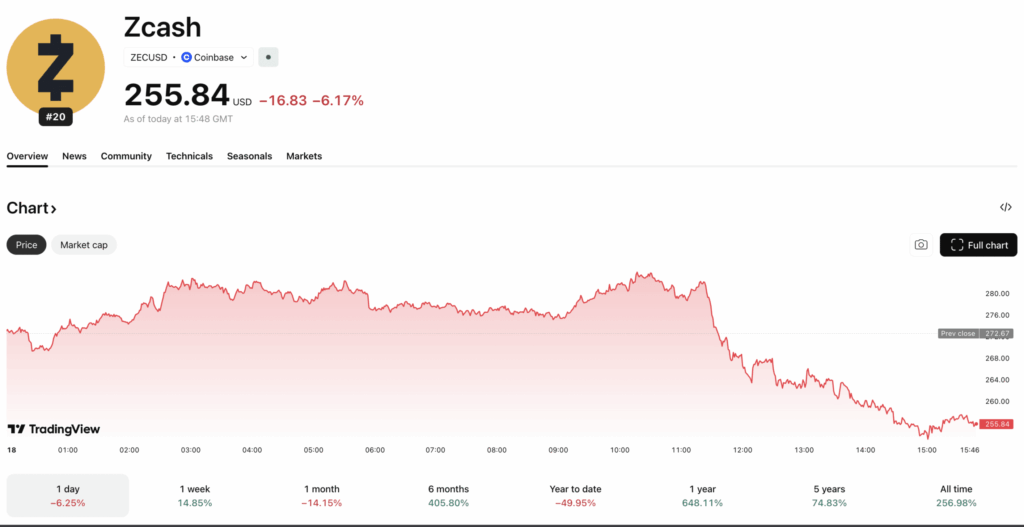

For the crypto and blockchain space, AI-driven trading tools, risk assessment models, and automated compliance systems are increasingly woven into digital asset platforms.

Decentralized protocols that integrate AI models face a particularly thorny question. If a frontier AI model is embedded in a DeFi protocol or used for on-chain analytics, who is responsible for ensuring it meets safety standards? The protocol developers? The AI lab that built the model? The DAO that governs the platform?